SEOSOON AI Crawler Study: Is Your Server Blocking AI Bots?

Empirical analysis of server-level AI bot blocking on 1,444 German websites | March 2026

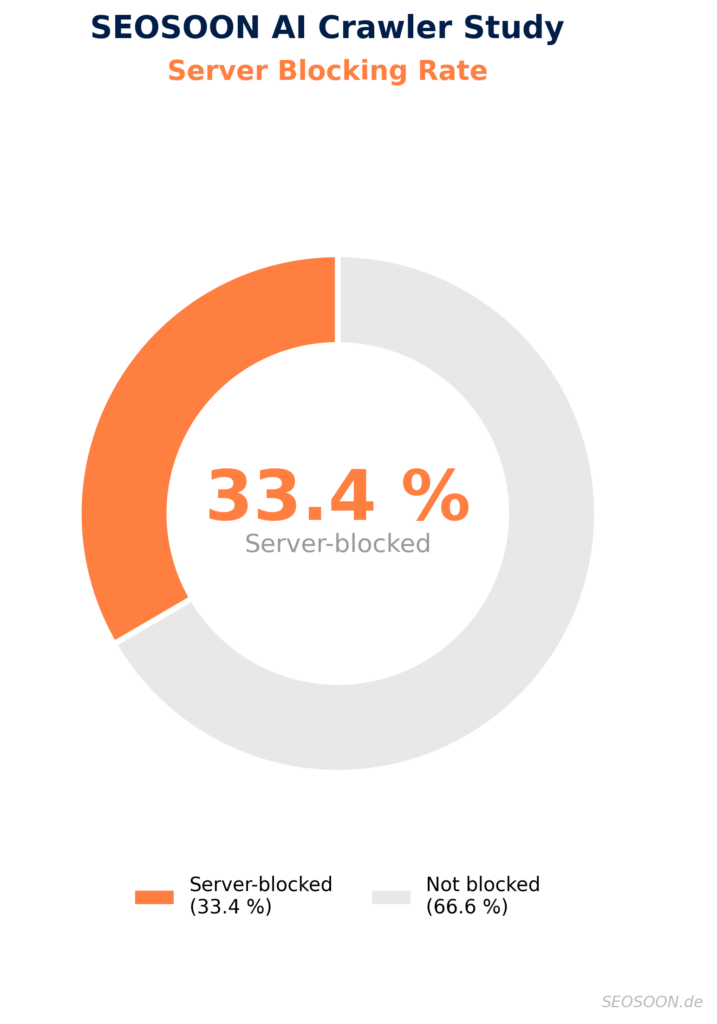

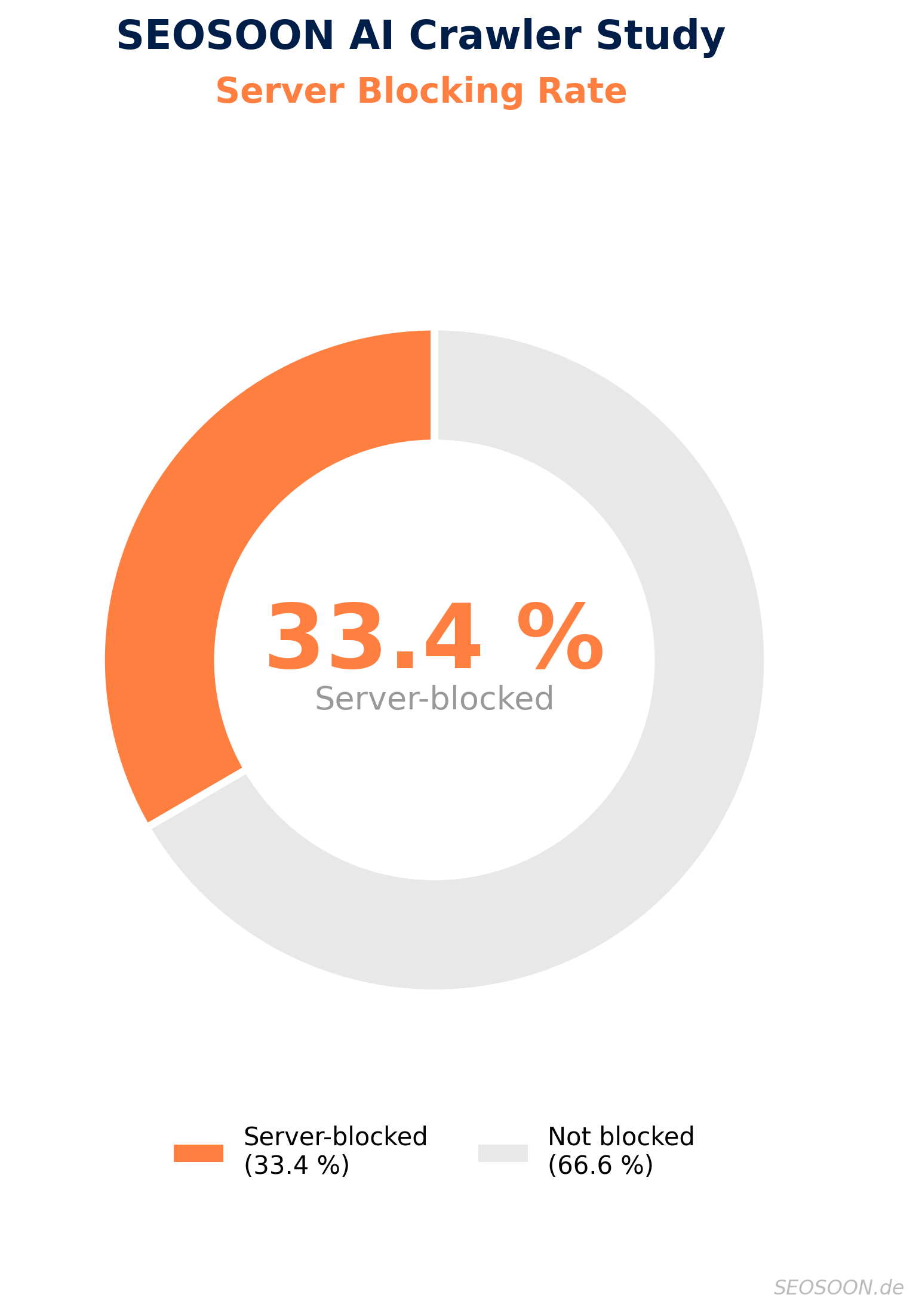

One in three German websites blocks AI bots at server level without the site owner even knowing. That is the finding of the SEOSOON AI Crawler Study, which analyzed 1,444 verified .de domains. 33.4% return an HTTP error code to AI crawlers, while the same page loads perfectly fine in a regular browser.

The key insight: The pattern points to the hosting provider, not the website operator. 27.5% of all domains block only training bots via HTTP, while search and assistant bots get through. Identical blocking profiles across dozens of domains on the same provider prove that the block is enforced at infrastructure level. The dentists, bakers and tradespeople of this country have no idea.

The SEOSOON AI Crawler Study focuses on the three most relevant AI providers: OpenAI, Anthropic and Perplexity (OAP). Their products reach most users, which makes their bots crucial for AI visibility.

Methodology: What actually happens when an AI bot knocks?

The SEOSOON AI Crawler Study measures the actual behavior of web servers: Each domain was requested using 12 AI bot user agents and 3 browser UAs (Chrome, Firefox, Safari) as a control group. A bot is considered server-blocked when the browser receives status 200 but the bot receives an HTTP error code.
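The server-blocked criterion can be sketched in a few lines of Python. This is a minimal illustration, not the study's actual tooling: the helper names `fetch_status` and `is_server_blocked`, the simplified user-agent strings, and the reading of "HTTP error code" as any status of 400 or above are assumptions.

```python
from urllib import error, request

# Hypothetical, simplified user-agent strings. The study does not list the
# exact strings it sent, so these are placeholders for illustration only.
BROWSER_UA = "Mozilla/5.0 (control-browser)"
BOT_UA = "GPTBot"


def fetch_status(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """Return the final HTTP status code the server sends for this user agent."""
    req = request.Request(url, headers={"User-Agent": user_agent})
    try:
        with request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except error.HTTPError as exc:
        # urllib raises HTTPError for 4xx/5xx responses; the code is what we want.
        return exc.code
    # Network failures (urllib.error.URLError) are deliberately not caught here.


def is_server_blocked(browser_status: int, bot_status: int) -> bool:
    """Browser gets 200 while the bot gets an error code (assumed: 400 or above)."""
    return browser_status == 200 and bot_status >= 400
```

For a single URL, `is_server_blocked(fetch_status(url, BROWSER_UA), fetch_status(url, BOT_UA))` compares one bot against the browser control. A site that fails for the browser as well is not counted as blocking, because the control request did not return 200.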

The sample comprises 1,444 verified .de domains from four nationwide sources (Yellow Pages, Tranco ranking, CommonCrawl, curated lists). Each domain was manually validated via a Chrome extension for reachability and authenticity, so 100% of the sample is real and reachable.

The 12 tested AI bots fall into three groups:

- Training bots (GPTBot, ClaudeBot, PerplexityBot, Bytespider, CCBot, Applebot-Extended, Meta-ExternalAgent) scrape data for model training.

- Search bots (OAI-SearchBot, Claude-SearchBot) deliver results for AI search engines.

- Assistant bots (ChatGPT-User, Claude-User, Perplexity-User) fetch content live (grounding).

Why is Google missing? Gemini uses the same Googlebot as traditional search, so blocking it separately is technically not possible.

Result 1: One in three websites blocks AI bots at server level

482 out of 1,444 domains (33.4%) block at least one of the 12 tested AI bots server-side. The server returns an HTTP error code to the AI crawler, while the same page is fully accessible via a regular browser user agent.

| Metric | Domains | Share |
|---|---|---|
| At least 1 bot server-blocked | 482 | 33.4% |
| At least 1 OAP bot server-blocked | 264 | 18.3% |
| Only training bots blocked (smart blocking) | 397 | 27.5% |
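The three metrics in the table can be derived from a per-domain set of server-blocked bots. The sketch below is one possible reading, not the study's code: `summarize`, `share` and the sample domains are hypothetical, and "only training bots blocked" is interpreted as a non-empty blocked set that contains training bots exclusively.

```python
# Bot groups as listed in the methodology section.
TRAINING = {"GPTBot", "ClaudeBot", "PerplexityBot", "Bytespider", "CCBot",
            "Applebot-Extended", "Meta-ExternalAgent"}
SEARCH = {"OAI-SearchBot", "Claude-SearchBot"}
ASSISTANT = {"ChatGPT-User", "Claude-User", "Perplexity-User"}
ALL_BOTS = TRAINING | SEARCH | ASSISTANT  # the 12 tested bots

# OAP = bots operated by OpenAI, Anthropic and Perplexity.
OAP = {"GPTBot", "OAI-SearchBot", "ChatGPT-User",
       "ClaudeBot", "Claude-SearchBot", "Claude-User",
       "PerplexityBot", "Perplexity-User"}


def summarize(blocked_by_domain):
    """Count domains per metric; input maps domain -> set of server-blocked bots."""
    profiles = list(blocked_by_domain.values())
    return {
        "any_bot": sum(1 for b in profiles if b),
        "any_oap_bot": sum(1 for b in profiles if b & OAP),
        # Assumed definition: something is blocked, and all of it is training bots.
        "only_training": sum(1 for b in profiles if b and b <= TRAINING),
    }


def share(count, total):
    """Percentage share rounded to one decimal, as in the table."""
    return round(100 * count / total, 1)


# Hypothetical sample, not study data.
sample = {
    "praxis.example": {"GPTBot", "ClaudeBot", "CCBot"},
    "shop.example": set(),
    "news.example": {"Bytespider"},
    "blog.example": {"ClaudeBot", "Claude-User"},
}
summary = summarize(sample)
```

Applied to the published counts, `share(482, 1444)`, `share(264, 1444)` and `share(397, 1444)` reproduce the percentages in the table.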

Result 2: OAP Analysis — Server Blocking for OpenAI, Anthropic and Perplexity

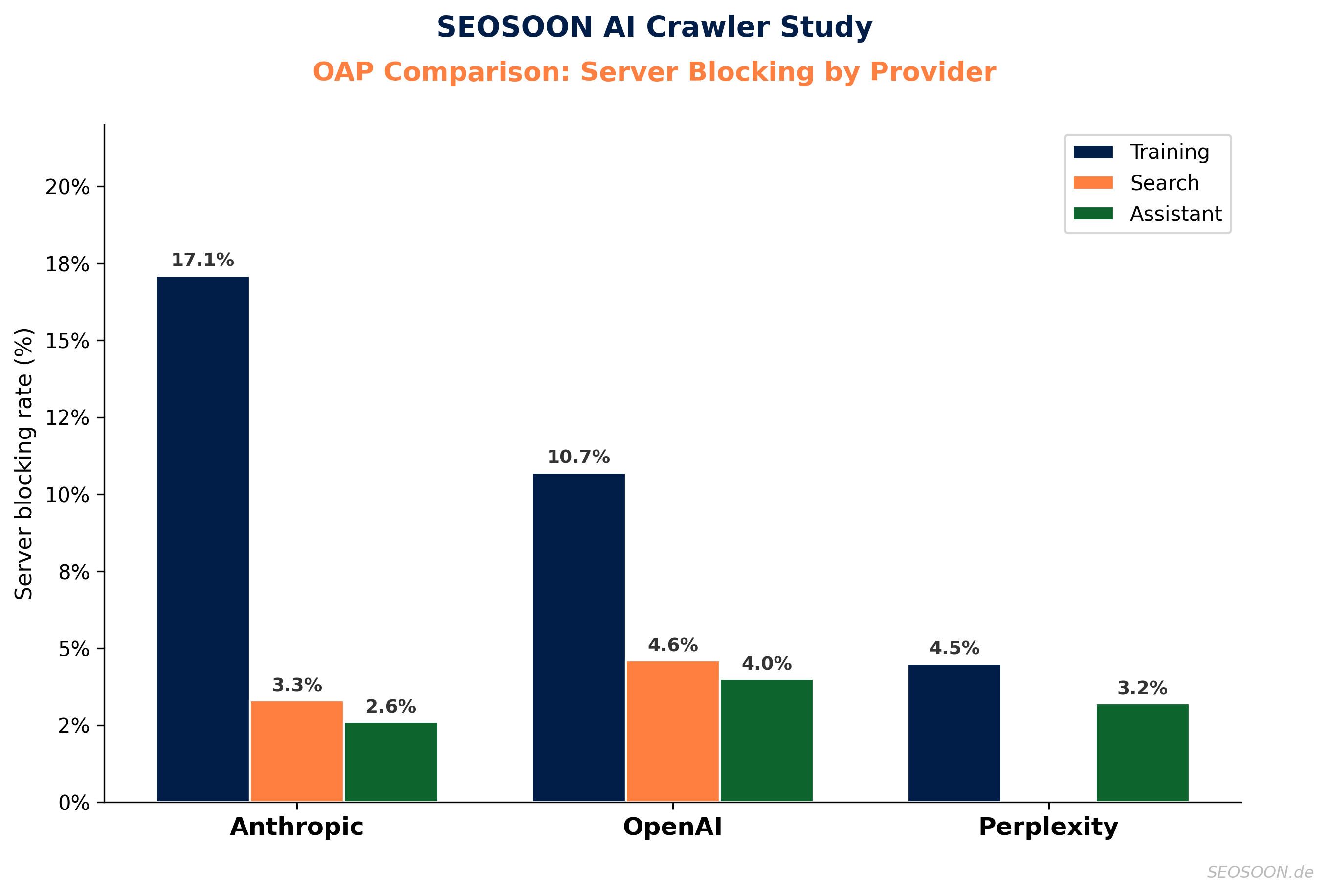

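The SEOSOON AI Crawler Study reveals significant differences between the three OAP providers. For each of them, training bots are blocked more often than assistant bots: by the figures in the table, about 6.6× for Anthropic (17.1% vs. 2.6%), 2.7× for OpenAI (10.7% vs. 4.0%) and 1.4× for Perplexity (4.5% vs. 3.2%).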

| Provider | Training | Search | Assistant | At least 1 bot |
|---|---|---|---|---|
| Anthropic | 17.1% | 3.3% | 2.6% | 17.3% |
| OpenAI | 10.7% | 4.6% | 4.0% | 11.3% |
| Perplexity | 4.5% | – | 3.2% | 4.6% |

ClaudeBot is blocked 60% more often than GPTBot at server level (17.1% vs. 10.7%). Perplexity is blocked least often, possibly because PerplexityBot is less well known and appears less frequently on provider blocklists.
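The "60% more often" figure is a relative difference between the two blocking rates. A short check, using only the two percentages quoted above (the helper name `relative_increase` is hypothetical):

```python
def relative_increase(rate: float, baseline: float) -> float:
    """How much higher `rate` is than `baseline`, in percent."""
    return (rate - baseline) / baseline * 100


claudebot_rate = 17.1  # % of domains server-blocking ClaudeBot
gptbot_rate = 10.7     # % of domains server-blocking GPTBot

increase = relative_increase(claudebot_rate, gptbot_rate)
print(f"{increase:.1f}%")  # about 59.8%, which rounds to the quoted 60%
```

The absolute gap is 6.4 percentage points; the 60% describes that gap relative to the GPTBot rate.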

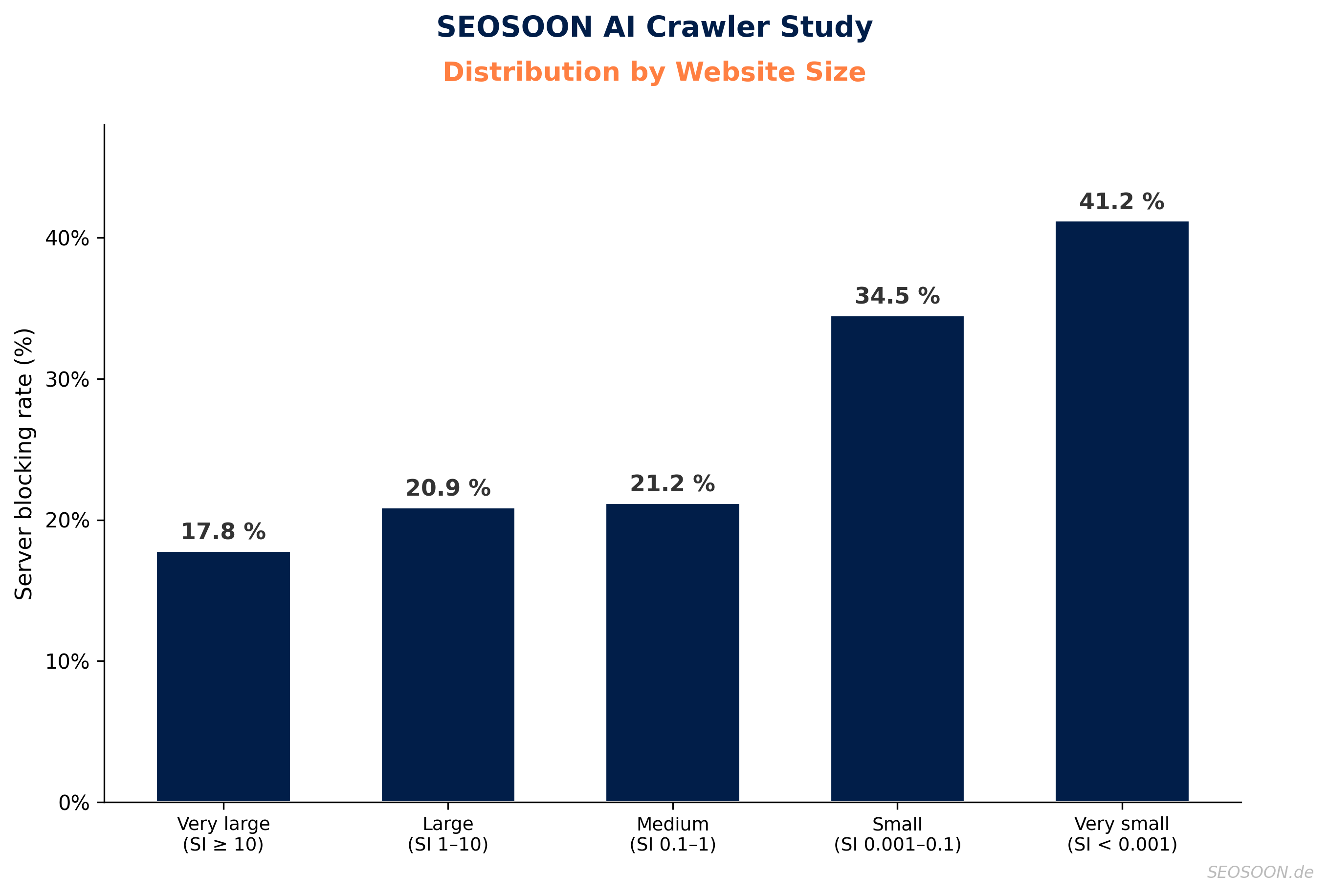

Distribution by website size

The SEOSOON AI Crawler Study shows a clear correlation between website size and server-level blocking:

The smaller the website, the higher the server blocking rate. For very small domains — dental practices, bakeries and trades businesses — the rate is 41.2%. These websites typically use shared hosting packages where the provider has configured AI bot blocking at infrastructure level. The operators usually have no idea that their content is invisible to ChatGPT, Claude and Perplexity.

For very large domains the rate is significantly lower at 17.8%. This does not mean AI bots are welcome there — large publishers and corporations use more sophisticated mechanisms such as IP whitelisting (e.g. under licensing agreements), bot detection and rate limiting.

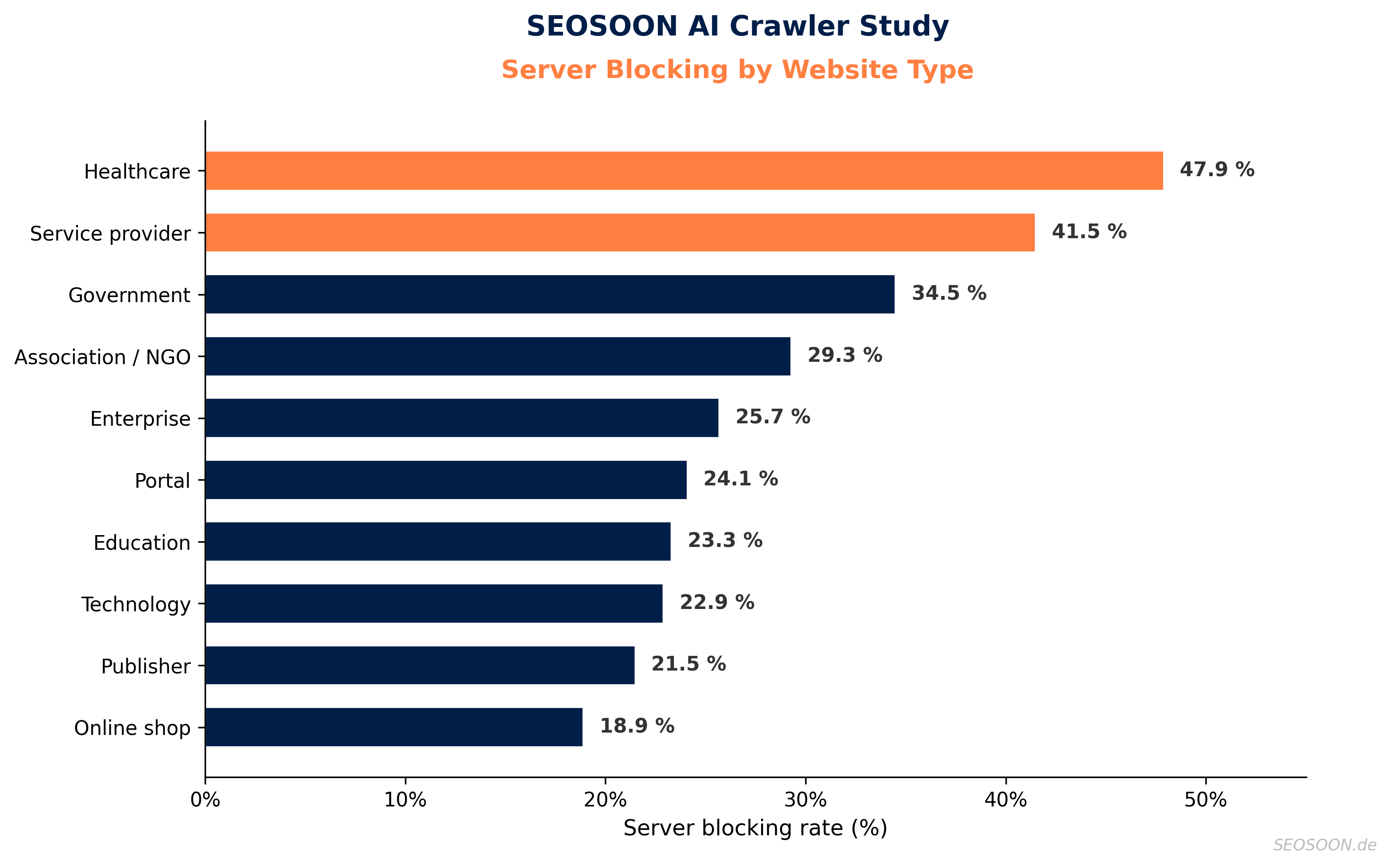

Healthcare and service providers hit hardest

Healthcare websites (47.9%) and service providers (41.5%) are the most heavily server-blocked — precisely the industries that typically use shared hosting and whose operators do not actively manage AI bot access.

Publishers are below average at 21.5% — they primarily block intentionally via robots.txt. Including robots.txt, their rate rises to 73.3%. Online shops block the least (18.9%) — product content in AI results is welcome.

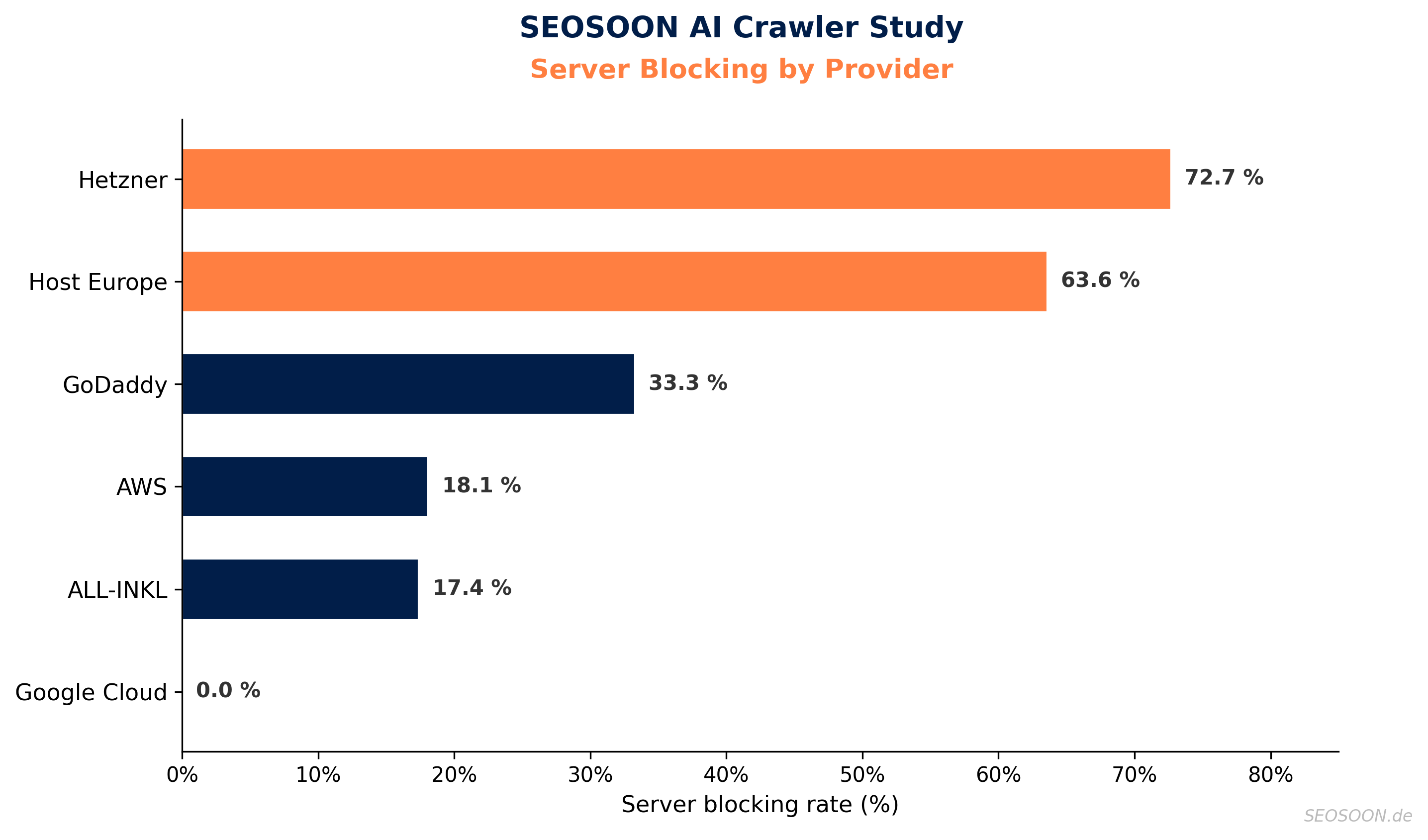

Provider ranking — who blocks at infrastructure level?

When dozens of domains on the same provider show the exact same blocking profile, the block is not individually configured but enforced globally by the provider.

| Provider | Domains | Server-blocked |
|---|---|---|
| Hetzner | 55 | 72.7% |
| Host Europe | 11 | 63.6% |
| GoDaddy | 9 | 33.3% |
| AWS | 72 | 18.1% |
| ALL-INKL | 46 | 17.4% |
| Google Cloud | 23 | 0.0% |

Hetzner (72.7%) and Host Europe (63.6%) stand out — here the provider blocks, not the operator. Google Cloud shows 0% server blocking; blocks there are exclusively intentional via robots.txt.
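The "identical blocking profile" argument can be made concrete by grouping domains per provider and counting how often each exact set of blocked bots occurs. The following is a sketch under assumptions, not the study's method: `dominant_profiles`, the provider names and the records are all hypothetical.

```python
from collections import Counter, defaultdict


def dominant_profiles(records):
    """For each provider, return its most common blocking profile and the
    fraction of that provider's domains sharing exactly this profile.

    `records` is an iterable of (provider, set_of_blocked_bots) pairs.
    """
    by_provider = defaultdict(Counter)
    for provider, blocked in records:
        # frozenset makes the profile hashable and order-independent.
        by_provider[provider][frozenset(blocked)] += 1

    result = {}
    for provider, counts in by_provider.items():
        profile, hits = counts.most_common(1)[0]
        result[provider] = (set(profile), hits / sum(counts.values()))
    return result


# Hypothetical records, not study data.
records = [
    ("provider-a", {"GPTBot", "ClaudeBot"}),
    ("provider-a", {"GPTBot", "ClaudeBot"}),
    ("provider-a", {"ClaudeBot", "GPTBot"}),
    ("provider-a", set()),
    ("provider-b", set()),
    ("provider-b", {"Bytespider"}),
    ("provider-b", set()),
]
profiles = dominant_profiles(records)
```

A provider where a large fraction of domains share one non-empty profile, like `provider-a` here, is a candidate for infrastructure-level blocking. A provider whose dominant profile is the empty set, like `provider-b`, is not.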

Conclusion: AI visibility starts at the server

The SEOSOON AI Crawler Study reveals a two-tier landscape of German websites:

Large websites and publishers make deliberate decisions about AI access. They block via robots.txt, negotiate licensing agreements and use IP whitelisting. Whether and which bots get access is a strategic decision.

Small and mid-sized websites — the dentists, bakers, tradespeople and local service providers — are often blocked without their knowledge. Their hosting provider has enabled AI bot blocking at infrastructure level and they have no idea that their content is invisible to ChatGPT, Claude and Perplexity. In a world where AI visibility is becoming increasingly business-critical, this is a problem.

Our recommendation:

- Check your server: Test whether AI bots can actually reach your website. What matters is the HTTP status code, not the robots.txt.

- Contact your provider: Ask specifically about AI bot blocking. Hetzner, Host Europe and other providers block at infrastructure level.

- Decide selectively: Training bots and assistant bots are not the same. If you want to be visible in AI results, you should at least allow assistant bots (ChatGPT-User, Claude-User, Perplexity-User) through.
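Once the server itself lets the bots through, the selective decision from the last point can be written down in robots.txt and checked locally. The sketch below uses Python's standard `urllib.robotparser`; the rule set and the URL `https://example.de/` are illustrative assumptions, not a recommendation from the study. Keep in mind that robots.txt is advisory and has no effect on a server-level block.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: disallow the three OAP training bots,
# explicitly allow the three assistant bots.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ClaudeBot
User-agent: PerplexityBot
Disallow: /

User-agent: ChatGPT-User
User-agent: Claude-User
User-agent: Perplexity-User
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(bot: str, url: str = "https://example.de/") -> bool:
    """True if the parsed robots.txt permits `bot` to fetch `url`."""
    return parser.can_fetch(bot, url)


for bot in ("GPTBot", "ClaudeBot", "ChatGPT-User", "Claude-User"):
    print(bot, "allowed" if allowed(bot) else "disallowed")
```

This only verifies what the file says. Whether a bot actually reaches the page still has to be tested against the HTTP status code.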

Including intentional robots.txt blocking, 40% of all German websites block at least one AI bot. Some get creative: qvc.de responds to AI crawlers with HTTP status code 418 (“I’m a teapot”), a status that originated as an April Fools' joke in RFC 2324 in 1998. The full analysis with all results, provider details and 15 identified status codes is available in the SEOSOON AI Crawler Study.

We have also published an excerpt of the results table with 140 sample domains and the results of all 5 test runs.

Can AI bots reach your website?

Test for free whether ChatGPT, Claude & Co can read your content or whether your server is blocking them.

AI Bot Checker

About the SEOSOON AI Crawler Study: Data collection was conducted in March 2026 by SEOSOON.de. All raw data is available. For questions or to use the data in your own publications, please contact us at seosoon.de/kontakt.

Citation: Bettinga, J. (2026). SEOSOON AI Crawler Study: Server-Level Bot Blocking on German Websites. seosoon.de. https://seosoon.de/studie/ai-bot-blocking-study-2026/